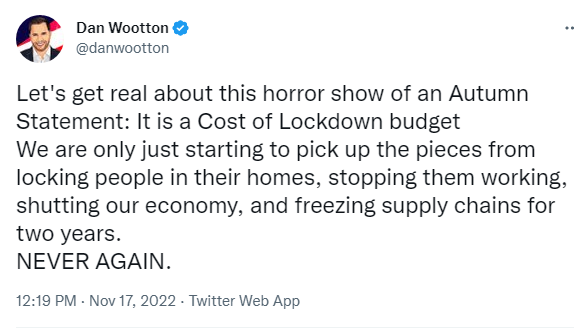

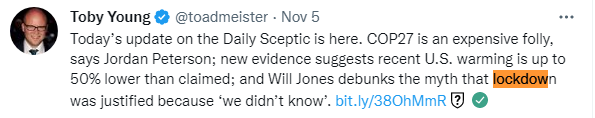

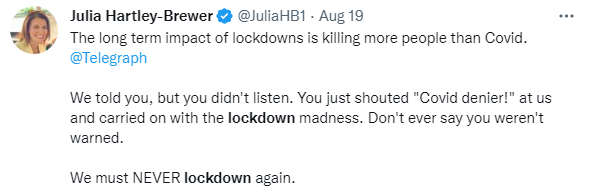

Ever since the start of the pandemic, the country has been plagued by not one but two viruses: Covid and lockdown scepticism. Here are some tweets from folk who have caught a bad dose of the latter.

https://twitter.com/toadmeister/status/1588791977163702272

https://twitter.com/JuliaHB1/status/1560531529486458880

I think for these unfortunates the disease may be fatal, but I want to encourage others to vaccinate themselves against this virulent strain of stupidity by reminding them of what really happened in the pandemic in the UK. As well as talking about lockdown strategies, I will quote widely from the book Spike, by Jeremy Farrar with Anjana Ahuja, to show how shambolic the UK Government was in addressing the pandemic. I thoroughly recommend everyone reads this account of the pandemic from the inside.

Jeremy Farrar is a medical researcher for the Wellcome Trust and a former professor of tropical medicine. To quote him from the first chapter:

I know what it is like to deal with the science and politics of a new disease. I helped to alert the world to a potentially serious outbreak of H5N1 bird flu in Vietnam in 2004, along with colleagues Tran Tinh Hien, Nguyen Thanh Liem and Peter Horby, then an epidemiologist working for the World Health Organization in Hanoi and now an Oxford University scientist.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 8). Profile. Kindle Edition.

Because he is an expert in new diseases, his testimony reveals a great deal about the Covid pandemic. Until November 2021 he was a member of SAGE, the group that advises the Government in emergencies, as it did in this one. Consequently he knows a lot about what actually happened in 2020 and beyond, as opposed to what people like to pretend happened.

Before the pandemic struck the UK, Farrar wrote in an email on Saturday 25 January 2020:

This cannot be contained in China. and will become a global pandemic over the next few days/weeks of uncertain severity. Since Influenza 1918 things have never turned out quite as bad as they appear early on … but this is the first time since SARS I have been worried … I worry [the UK government] are underestimating the potential impact.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 50). Profile. Kindle Edition.

Still talking about January 2020 he writes:

I’ve known Chris [Whitty, Chief Medical Adviser to the UK Government] for years too: he also trained in infectious diseases and did a period of study in Vietnam. The global health and infectious disease community can sometimes adopt a slightly weary attitude of ‘We’ve seen it all before and these things are never as bad as you think.’ And that was Chris, initially: he wanted to be much more cautious, to wait and weigh everything before taking action. The lesson from every epidemic is that if you wait until you know everything, then you are too late. If you fall behind an epidemic curve, it is extraordinarily hard to get back in front of it. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 89). Profile. Kindle Edition.

Further:

[Whitty] talked about the outbreak as a marathon not a sprint. In a sense, outbreaks are marathons, but there are times in every long-distance race when you need to go fast. That go-slow outlook pervaded much of the thinking in January and February 2020 in the UK, even though all the information that had accumulated by the end of January should have set off the loudest of sirens. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 90). Profile. Kindle Edition.

So it is clear that Farrar felt the Chief Medical Adviser to the UK Government was initially too cautious about taking action on this outbreak; in other words, too slow to move.

By 15 February, the outbreak in China was seen as uncontainable, according to the minutes [of a SAGE meeting]. On 18 February, it was minuted that Public Health England could perhaps cope with five coronavirus cases a week, generating 800 contacts that would need tracing. That could be scaled up to 50 cases a week and 8,000 contacts – but, if sustained transmission took off, contact tracing would become unviable. Today, it seems unbelievable that, thanks to a decade of austerity, the world’s fifth richest economy would be so woefully poised to respond and scale up fast to a public health emergency. The running down of public health in the decade before 2020 helped to turn what would have been a serious challenge into an ongoing tragedy. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 92-93). Profile. Kindle Edition.

Quite right; successive Tory Governments have run down the NHS to promote their preferred method of delivering health services - privately. And this left the UK hopelessly exposed to a virulent virus with a negligent Tory Government in power.
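To see how little headroom those contact-tracing figures gave, here is a rough sketch of the arithmetic. The case and contact numbers are the ones quoted from the SAGE minutes above; the three-day doubling time is my own illustrative assumption (borrowed from the growth rates discussed elsewhere in the book), not something the minutes state for mid-February.

```python
import math

# Figures quoted from the SAGE minutes of 18 February 2020 (see passage above).
BASELINE_CASES_PER_WEEK = 5
BASELINE_CONTACTS_PER_WEEK = 800
MAX_CASES_PER_WEEK = 50

# ASSUMPTION (not in the minutes): case numbers double every 3 days.
ASSUMED_DOUBLING_DAYS = 3.0


def contacts_per_case() -> float:
    """Average number of contacts to trace for each case, implied by the minutes."""
    return BASELINE_CONTACTS_PER_WEEK / BASELINE_CASES_PER_WEEK


def days_until_capacity_exceeded(start_cases: float, max_cases: float,
                                 doubling_days: float) -> float:
    """Days for weekly cases to grow from start_cases to max_cases
    under simple exponential growth with the given doubling time."""
    return doubling_days * math.log2(max_cases / start_cases)


if __name__ == "__main__":
    print("Contacts per case:", contacts_per_case())
    print("Contacts at maximum capacity:", contacts_per_case() * MAX_CASES_PER_WEEK)
    days = days_until_capacity_exceeded(
        BASELINE_CASES_PER_WEEK, MAX_CASES_PER_WEEK, ASSUMED_DOUBLING_DAYS
    )
    print(f"Days until scaled-up capacity is exceeded: {days:.1f}")
```

Under that assumed doubling time, even the scaled-up capacity of 50 cases a week would be outrun in roughly ten days, which is the point of the "unviable" warning.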

Around this time, herd immunity was being floated as a possible approach to the virus. Boris Johnson said:

...one of the theories is, that perhaps you could take it on the chin, take it all in one go and allow the disease, as it were, to move through the population, without taking as many draconian measures.

...indicating that someone had advised him this was a possible approach. Farrar writes:

Pursuing a fast ‘herd immunity by natural infection’ strategy would not have been ‘following the science’, as was so often claimed at that time, but doing the opposite. It went against the science. We have very little herd immunity to circulating coronaviruses that cause the common cold, which is why we are repeatedly infected. We have no idea if there is any long-lasting immunity to the related coronaviruses SARS-CoV-1 and Middle East respiratory virus. Back then, because of our lack of immunological knowledge, we could not have assumed herd immunity by natural infection was even viable for Covid-19, let alone safe. It is not a twenty-first-century public health plan. The idea of pursuing such an idea three months into a new disease beggared belief. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 105-106). Profile. Kindle Edition.

And:

As far as I was concerned, the way forward in late-February was: to accept elimination was not possible (because of early, widespread seeding); reduce transmission; get R below 1; flatten the peak; stay within NHS limits; buy time to put measures like testing, tracing and isolation in place; increase NHS capacity; and to develop drugs and vaccines.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 106). Profile. Kindle Edition.

By March (UK still not in lockdown!), Farrar was seriously worried about the Government's approach. After seeing some SAGE minutes that seemed to downplay how perilous the situation was, on 14th March 2020 he wrote an email to Patrick Vallance (the Government's Chief Scientific Adviser) and Chris Whitty:

Are you content that these minutes convey:

The need for urgency that was palpable at the meeting

The speed that this is unfolding

We are further ahead in the epidemic – not ‘we may be’

The framing of the modelling, and the behavioural science comes with a lot of uncertainties and caveats

Impatience that the diagnostic testing lags behind and that there are ‘plans’ to ramp up to 1000 tests/week at some point?

Not sure when the decision to stop testing in the community was made – is that right, no more testing of community non-hospitalised cases?

The significant lag between any decisions and impact, such a time lag in a fast-moving epidemic is concerning

The need for social distancing interventions to be implemented as soon as policy could be written and communicated – very soon

Need for comprehensive suite of social distancing interventions

Increase in capacity in the NHS – an operational issue but clearly a critical one

In next 24 hours

* Act early and decisively

* Open access to all the evidence being used within UKG [UK government]

* Shift in policy on social distancing with immediate effect and across all – working from home whenever possible, work places, mass gatherings, religious meetings, restaurants/bars/cinemas, public transport, advice to shops, limit number of people in shops and other places – etc,

* Home quarantine for suspected cases and family for 14 days

* ‘Shielding’ of vulnerable populations

* Economic support for businesses, self-employed etc – Fair and equity

* If possible keep schools open until Easter (2 weeks) to avoid staffing issues within NHS – but within schools actions such as pushing hand washing, no assemblies, break up classes into smaller units, no gatherings etc etc

* Massive increase capacity in the NHS

* Massively increased diagnostic capacity

* Support through G7 for R&D and Manufacturing of Diagnostics, Therapeutics and Vaccines

* Clear lines of decision making and control – may not be a time to keep a consensus if that means delaying inevitable decisions

* Increase the capacity, demands and expectations on DHSC, PHE, NHS and single line of decision making... I believe these changes needed in the next 24 hours – doubling time now 2.5–3 days

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 118-119). Profile. Kindle Edition.

Even Dominic Cummings (Chief Adviser to the PM) and his data scientists were getting worried. Farrar quotes Cummings:

‘On that Friday night, Ben Warner said to me and Imran [Imran Shafi, Boris Johnson’s private secretary], “I think this herd immunity plan is going to be a catastrophe. The NHS is going to be destroyed. We should try to lock everything down as fast as we possibly can.”’

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 121). Profile. Kindle Edition.

Farrar adds "That was my view, and I am sure most people on SAGE agreed." Then:

Timothy Gowers [British mathematician and Fields medallist] told Cummings in a series of emails that, in his view, the best strategy was to go in hard and early on interventions like lockdowns, because of exponential growth in case numbers. Timothy explains the reasoning he gave to Cummings: ‘I felt very strongly at the time that a herd immunity strategy was wrong – a simple back-of-the-envelope calculation made it clear that in order to implement it, either hospitals would have to be massively overwhelmed or the strategy would take years, and depend on reinfection not being possible … Given that a herd immunity strategy couldn’t possibly work, lockdown was inevitable, and that given that lockdown was inevitable, it was madness not to start immediately.’ All it needed, Timothy says, was an understanding of basic mathematics. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 121). Profile. Kindle Edition.

This had been my (pretty worthless, admittedly) opinion since I had heard what was happening in Italy. We needed to do everything we could to reduce the R number - it was just maths (and still is). I told my boss I was going to work from home, and urged him to send everyone home. We locked down before the UK Government did, as did the Wellcome Trust (what did they know?).
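For anyone who wants to see what a back-of-the-envelope calculation of this kind might look like, here is a minimal sketch. To be clear, these are not Gowers' own figures: the herd immunity threshold, the share of infections needing intensive care, the length of an ICU stay and the number of ICU beds are all illustrative assumptions of mine, chosen only to show the shape of the argument.

```python
# All inputs below are ILLUSTRATIVE ASSUMPTIONS, not figures from the book.
UK_POPULATION = 67_000_000        # approximate UK population
HERD_IMMUNITY_FRACTION = 0.60     # assumed share of people who must be infected
ICU_FRACTION_OF_INFECTED = 0.01   # assumed share of infections needing intensive care
ICU_STAY_DAYS = 10                # assumed average ICU stay
ICU_BEDS = 5_000                  # assumed ICU beds available for Covid patients


def icu_bed_days_needed(population: float, threshold: float,
                        icu_fraction: float, stay_days: float) -> float:
    """Total ICU bed-days required to infect enough people to reach the threshold."""
    return population * threshold * icu_fraction * stay_days


def years_within_capacity(bed_days: float, beds: float) -> float:
    """Years needed if ICU beds are kept full but never exceeded."""
    return bed_days / beds / 365


def overload_factor(bed_days: float, beds: float, days: float) -> float:
    """Average ICU demand as a multiple of capacity if the epidemic runs for `days`."""
    return bed_days / days / beds


if __name__ == "__main__":
    bed_days = icu_bed_days_needed(
        UK_POPULATION, HERD_IMMUNITY_FRACTION, ICU_FRACTION_OF_INFECTED, ICU_STAY_DAYS
    )
    print(f"Years to reach threshold within ICU capacity: "
          f"{years_within_capacity(bed_days, ICU_BEDS):.1f}")
    print(f"ICU overload if done in 90 days: "
          f"{overload_factor(bed_days, ICU_BEDS, 90):.1f}x capacity")
```

With these made-up but not outlandish inputs you get the same two horns Gowers describes: either the process takes years, or intensive care is swamped many times over. Change the assumptions and the numbers move, but it is hard to make both horns go away.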

Around this time the UK stopped community testing, despite the WHO urging every country to 'Test, test, test'. Farrar says in parentheses "Cummings claims it was dropped as part of the herd immunity plan" (p. 124). Who the hell was pushing herd immunity if not SAGE or Cummings?

I remember Jenny Harries, England’s deputy chief medical officer, saying publicly that the UK did not need to follow the WHO’s advice because it did not apply to high-income countries. It was a dreadful thing to say. There was no public acknowledgement that abandoning community testing was a decision based not on public health or science considerations but on a lack of testing capacity. It meant we were flying blind when it came to transmission outside of hospitals, in the community and in care homes.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 124). Profile. Kindle Edition.

Hearing now about the turmoil inside Number 10 that weekend adds weight to what I and many others felt at the time: there was huge uncertainty about who was ultimately taking responsibility for the pandemic response. Boris Johnson as prime minister seemed more like an old-fashioned chairman than a chief executive and was being advised by Dominic Cummings and other figures in Number 10. It was unclear who was pulling the strings and who had the authority to ask, let alone compel, others to act. Cummings shares the view that general confusion reigned in that period: ‘Nothing worked. The preparations were a farce, the plan was a catastrophe and had to be abandoned, and the management of many crucial things was a disaster.’ (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 125). Profile. Kindle Edition.

And his boss had not been paying attention. Cummings adds: ‘The PM spent most of February dealing with a combination of divorce, his current girlfriend wanting to make announcements about their relationship, an ex-girlfriend running around the media, financial problems exacerbated by the flat renovation, his book on Shakespeare, and other nonsense, because obviously he never took the whole thing seriously.’

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 125). Profile. Kindle Edition.

Time lags are a consequence, in part, of piecemeal decision-making. There is an understandable desire for consensus as critical decisions are being made. Then, that advice must be translated into action. Each step builds in a delay. But time is the one thing you do not have in a fast-moving epidemic. Delays matter when an epidemic is doubling every three days. For one thing, you are always viewing data in the rear-view mirror. The number of cases, hospitalisations and deaths today reveals the state of the epidemic days or weeks ago, not the state of the epidemic today. Today’s data reflects the past.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 126-127). Profile. Kindle Edition.
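The cost of delay under a fixed doubling time is easy to put numbers on. The sketch below assumes pure exponential growth with the three-day doubling time mentioned in the quote; real epidemics are messier, so treat the output as an illustration of scale rather than a forecast.

```python
def growth_factor(delay_days: float, doubling_days: float) -> float:
    """How many times larger an epidemic becomes after delay_days
    if it doubles every doubling_days (simple exponential growth)."""
    return 2 ** (delay_days / doubling_days)


if __name__ == "__main__":
    DOUBLING_DAYS = 3  # doubling time taken from the quote above
    for delay in (3, 7, 10, 14):
        factor = growth_factor(delay, DOUBLING_DAYS)
        print(f"{delay:>2} days of delay -> epidemic about {factor:.1f}x larger")
```

A ten-day delay at that growth rate means an epidemic roughly ten times the size by the time anyone acts, and because today's data reflects the past, the numbers on the table at the moment of decision understate it further.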

Cummings knows now what should have happened: ‘In retrospect, it’s obvious that if we locked down a week earlier that would have been better; two weeks earlier would have been better still … It’s just unarguable from my perspective that everything we did should have happened earlier. Fewer people would have died, lockdown would have been shorter and we would have had less economic destruction.’ He believes that tens of thousands, even hundreds of thousands, more people would have died if no action had been taken for a fortnight, which he claims could ‘easily have happened given the then current plans’. At his appearance before MPs, Cummings apologised for the fact that he and others had let the country down and that tens of thousands of people died who did not need to die. That people died needlessly is, alas, unarguable from any insider’s perspective. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 127-128). Profile. Kindle Edition.

Cummings thought that the herd immunity strategy came from SAGE, but as an insider Farrar disagrees:

Cummings emphasises that Patrick Vallance and Chris Whitty were always scrupulous in their presentation of SAGE discussions: ‘They were always careful to say, “This is not policy advice”, and I was careful to say, “We understand, you’re not telling us what policy should be, that’s our job. But what you are saying very explicitly is the Chinese plan will fail, the Singapore plan will fail, the Taiwan plan will fail and everyone’s basically going to have to do herd immunity,” right?’ I do not believe that Chris and Patrick, or SAGE, would ever have knowingly agreed to that. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 130). Profile. Kindle Edition.

I do not believe that behavioural scientists on SAGE ever claimed that the public would not accept lockdowns. The scientists themselves have rejected Cummings’ assertions [that the behavioural scientists said the public would not accept lockdowns] and point out that it was not their role to suggest interventions, only to advise on how people should be encouraged to adhere to whatever interventions that ministers chose. And I was told that ministers were universally opposed to even household isolation. We understood that people would comply with measures if the rationale was explained, the reasons were transparent, they were applied consistently, and they were not disadvantaged by complying. It is still the case that the government must own the strategy it pursued, rather than hide behind the scientists. Cummings says he thought these ideas originated on SPI-B or SAGE, but I dispute this. It should be noted that the UK government does have its own Behavioural Insights team and David Halpern (head of that team) gave the first interview mentioning herd immunity. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 130-131). Profile. Kindle Edition.

I think, reading between the lines here, the herd immunity approach was politically acceptable to the right-wing nuts who made up the Brexit Government, and particularly Boris Johnson, who was not at his best when delivering serious news. He was a boosterish libertarian, and not a safe pair of hands at a time when attention to detail was vital.

Still, we had clear evidence that the lockdowns in China and other countries were bending the curve the right way. We were being chastened daily by the tales from critical care units in Italy; on 15 March, cases in Italy had risen to nearly 25,000, with more than 1,800 deaths. It was surely time for action. If we needed yet another compelling reason to act, ‘Report 9’ fitted the bill. This paper was a pivotal piece of epidemiological modelling led by Neil [Ferguson]’s team. The team had modelled how the epidemic might progress if left to its own devices. If no preventive measures were taken, and if people did not change behaviour of their own accord – an ‘unmitigated’ epidemic – Neil’s team calculated that around 80 per cent of the population would become infected. With an overall infection fatality rate of 0.9 per cent, 510,000 people would die in the UK and more than 2 million in the US. For comparison, there were an estimated 384,000 deaths among British forces in the Second World War. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 131-132). Profile. Kindle Edition.
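The headline numbers in that passage can be roughly checked with a one-line multiplication. The attack rate and fatality rate below are the ones quoted; the population figures are my own round-number assumptions. The simple product lands in the same ballpark as the quoted totals but does not reproduce them exactly, presumably because the published model works with age-specific rates rather than a single average.

```python
def unmitigated_deaths(population: float, attack_rate: float, ifr: float) -> float:
    """Crude estimate: population x share infected x infection fatality rate."""
    return population * attack_rate * ifr


if __name__ == "__main__":
    ATTACK_RATE = 0.80   # "around 80 per cent" infected, as quoted
    IFR = 0.009          # "0.9 per cent" infection fatality rate, as quoted

    # ASSUMPTION: approximate 2020 populations, not taken from the book.
    populations = {"UK": 67_000_000, "US": 330_000_000}

    for country, pop in populations.items():
        deaths = unmitigated_deaths(pop, ATTACK_RATE, IFR)
        print(f"{country}: roughly {deaths:,.0f} deaths in an unmitigated epidemic")
```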

This was the point that Steven Riley had been pushing, in raising the prospect of a China-style lockdown on the basis of the precautionary principle in his simple modelling note to SPI-M on 10 March. The strategy needed to switch immediately from mitigation to suppression – to try as hard as possible to stop the virus from circulating.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 132-133). Profile. Kindle Edition.

That evening [March 16th], I expected to hear that the shutters were going down everywhere in the UK, as we had done at Wellcome. Instead, when Boris Johnson appeared on TV, he asked people to work from home, to stop non-essential travel and avoid pubs, bars, restaurants and mass gatherings. Venues could remain open. The measures were being advised rather than mandated, and the PM, a self-confessed libertarian, made clear his reluctance to instigate them. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 134). Profile. Kindle Edition.

So it's clear from a SAGE insider's view that lockdown should have occurred on March 16th (if not before) but the Government did not act.

I was shocked: rather than taking a tough decision, the PM ducked it. Johnson, in fact, did exactly what SAGE had cautioned against at the 25 February meeting. Social distancing measures should be mandatory, not optional. A prime minister cannot ask people to lock down if they feel like it. No countries in South East Asia had done it this way, for good reason: that is not the way these sorts of public health measures work. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 134). Profile. Kindle Edition.

Farrar does offer a mea culpa, which I hope SAGE learns from (it doesn't seem to have done so yet):

It is a fair criticism that the minutes from SAGE meetings do not explicitly show advisers calling for stronger action. I regret that SAGE was not blunter in that regard. We should have been, especially during that key weekend between Friday 13 and Monday 16 March; my Saturday email on 14 March was a late-night attempt to lay out the argument much more plainly and directly to Patrick and Chris that action, namely lockdown, was needed within 24 hours.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 134). Profile. Kindle Edition.

The middle of March 2020 was a critical time period in the failure of the UK response. Frankly, I was amazed that the government had not moved more definitively. By this time, Wellcome, along with many organisations, had locked its doors – after a month of contingency planning. With its lack of panic, the UK looked like an international outlier. Ministers, as well as Patrick and Chris, said the right measures would be taken at the right time. The belief was that people would only be able to comply with stringent social distancing measures for a limited period of time before ‘behavioural fatigue’ set in – and it would be dangerous if people threw in the towel just before the epidemic peaked.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 136). Profile. Kindle Edition.

Behavioural fatigue seems to have been a peripheral idea promoted beyond any merit or evidence. Behavioural scientists on SAGE had acknowledged that people might struggle to comply with restrictions, but had also, importantly, cautioned this was an intuitive observation, not one based on evidence. Besides, the danger was escalating and options were running out. On 16 March 2020, nearly 700 psychologists and behavioural researchers released an open letter to the government asking for the relevant evidence on behavioural fatigue to be disclosed or, if there was no evidence, to change tack. They were ‘not convinced that enough is known about “behavioural fatigue” or to what extent these insights apply to the current exceptional circumstances. Such evidence is necessary if we are to base a high-risk public health strategy on it...’ It was a constructive intervention. So, by 16 March, the judgement from outbreak veterans, epidemiological modellers and the UK’s behavioural science community had reached a consensus: act now.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 136-137). Profile. Kindle Edition.

SAGE advised that schools should be closed as soon as possible, except possibly for the children of key workers; pubs, restaurants, other hospitality and leisure should shut, along with indoor workplaces. Any interventions should happen sooner rather than later, we advised once again. Strikingly, a YouGov poll showed that 16 per cent of school pupils had not shown up the week beginning 16 March. Parents were already ahead of the politicians. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 138-139). Profile. Kindle Edition.

People's behaviour may be the most obvious reason why the lockdown sceptics are wrong. Even if a lockdown isn't imposed, millions of people will withdraw in the face of a deadly virus; that is surely human nature. Of course, millions of others won't be able to withdraw because of their circumstances, so a deadly lottery plays out depending on one's lifestyle. It's horrifyingly unfair to inflict mass death on one part of the population (predominantly the poorer part) whilst others try to isolate.

A COBR meeting was scheduled for Friday 20 March 2020. Astonishingly, the PM reportedly skipped it. It was not until the next SAGE meeting, on Monday 23 March, after ten wasted days, that reality would hit those in power: the epidemic was on a runaway trajectory.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 139). Profile. Kindle Edition.

At that meeting, the modellers offered a chilling, two-page consensus statement on how the rapidly expanding outbreak would evolve. The number of confirmed coronavirus patients entering intensive care was doubling every three to five days, meaning that hospitals in the capital would be overrun by end of March. The statement ran: ‘It is very likely that we will see ICU capacity in London breached by the end of the month, even if additional measures are put in place today.’ Breaches outside London would come one or two weeks later. None of this was a surprise to those who had been paying attention in the three previous SAGE meetings. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 140). Profile. Kindle Edition.

Lockdowns were essential, not an option.

Those deafening messages from the SAGE meetings of 13, 16, 18 and 23 March – that the country was racing dangerously up the epidemic curve, and that those in power needed to put the brakes on – finally struck home at the heart of government. At 8.30pm that night, Boris Johnson addressed the nation to announce that a legal stay-at-home order would be put in place immediately.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 141). Profile. Kindle Edition.

The UK was finally going into the kind of lockdown that Italy, France, Spain and Belgium had already enacted. As I noted in my 24 March update to Wellcome colleagues: The UK COVID19 policy finally aligned with global efforts – I do not believe you will hear the term ‘Natural herd immunity is our strategy’ again from UKG. But it had taken ten days to act – during which the doubling time of the epidemic was perhaps five days or less. The decision not to act sooner was wrong and undoubtedly cost lives. In June 2020, Neil Ferguson told the House of Commons Science and Technology Select Committee that locking down one week earlier would have halved the death toll. By the time Neil spoke, around 40,000 people in the UK had lost their lives to coronavirus. That was, on the face of it, an appalling miscalculation: 20,000 lives swapped for an extra week of liberty. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 142). Profile. Kindle Edition.

Note that UK Government delays in implementing lockdowns cost lives. For comparison, Italy went into a national lockdown on 9 March; Spain on 16 March; France on 17 March; and Belgium on 18 March. The UK's lockdown was not announced until 23 March and only became legally enforceable on 26 March. We knew, but did not act.

Postponing intervention against contagion is a false economy and a drain on freedom: a country that shuts down later stays closed for longer – and risks losing the trust of its citizens that the State can protect them. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 142). Profile. Kindle Edition.

So, ironically, lockdown scepticism ends up prolonging lockdowns. The health and economic consequences of the past two years are primarily down to the virus itself, not the lockdowns. One study showed that New Zealand, which locked down hard and early, "produced the best mortality protection outcomes in the OECD. In economic terms it also performed better than the OECD average in terms of adverse impacts on GDP and employment." But the evidence above from Farrar's book shows that there was no alternative to locking down, devastating though that was for many. Given its inevitability, the UK should have locked down earlier on each occasion it ended up having to lock down.

-------------------------------------------------------------------------------------------------------------------

I hope this provides enough evidence of just how wrong lockdown sceptics are. Below I add more quotes covering failures of Government on other matters and subsequent lockdowns.

Testing was being dangerously outpaced by transmission and was proving to be the Achilles heel of the UK response. Public Health England was doing around 10,000 per week by 11 March. By comparison, Germany was doing 500,000 a week, some in drive-through centres.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 143-144). Profile. Kindle Edition.

An epic failure of Government.

With high rates of nosocomial (hospital/care home) transmission, the obvious thing to do would have been to test all staff, including cleaners and ambulance drivers, so they could isolate if infected. But testing also presented a Faustian bargain: do we test everyone working in hospitals, plus patients and staff, knowing that maybe 25 per cent of the workforce would have to isolate and the NHS would collapse? Or do we essentially turn a blind eye? That blindness lit the touchpaper for the devastating epidemic in hospitals and care homes. Patients with the virus were discharged, untested, from hospital back into sometimes barely regulated institutional settings, where poorly paid carers work across multiple care homes. Often, hospitals had little choice but to send patients back to care homes; they had been instructed to clear beds for the coming storm.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 144). Profile. Kindle Edition.

As SAGE noted ... the UK needed a test, trace and isolate system (TTI). It had to be in place before restrictions were lifted, because TTI works best when infections are low to begin with. It is a bit like rigging up a system to detect forest fires: a small flare-up is easily spied against a quiet background but not amid a raging blaze. TTI is a textbook recommendation in public health and countries like Germany and South Korea were running it smoothly. Why couldn’t the UK?

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 145). Profile. Kindle Edition.

Ministers decided it should be run centrally and, on 7 May, Baroness Dido Harding was appointed as its unpaid chair. It was a grave error. Health secretary Matt Hancock praised her ‘significant experience in healthcare and fantastic leadership’, but I could not see what skills she brought to the role. She chaired the regulator NHS Improvement but she had no extensive experience of public health.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 145). Profile. Kindle Edition.

Another bad error.

Some of her comments to select committees since then have done nothing to soften my opinion. She claimed that nobody could have predicted the surge in September 2020, at the beginning of the second wave. That surge was obvious and had even been modelled: cases were creeping upwards just before schools opened. As Timothy Gowers says, epidemiology is sometimes about grasping basic maths.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 145-146). Profile. Kindle Edition.

SAGE concluded later in the year that Test and Trace, launched in late May, delivered only a marginal benefit, even though many talented people dedicated great effort to trying to tackle its shortcomings. Despite the PM claiming it would be world-beating, it was not really functional nor anywhere near the capacity needed to make a difference. Contact tracing needs to reach 80 per cent of an infected person’s contacts within 48 hours to make inroads into an epidemic. The reality was closer to 50 per cent. Centralising the TTI, and bypassing local authorities, was a mistake. As anyone who has ever worked in diseases knows: all epidemics are local. Local authorities around the UK housed regional public health teams who knew their communities well and were used to contact tracing for outbreaks of food poisoning and sexually transmitted diseases.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 146). Profile. Kindle Edition.

Another error.

A related problem around that time was the NHSX app, integral to the TTI effort. Apple and Google had offered to help to deliver a tracing app, but the government insisted on keeping everything in-house. I had spoken to people like Regina Dugan, who runs Wellcome Leap, our futuristic research arm, about this approach. Regina has previously worked at Facebook and Google, and the view of people with her expertise was that any attempt to go it alone was likely to fail. That proved to be true, but the go-it-alone mentality persisted for far too long, to nobody’s benefit.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 146). Profile. Kindle Edition.

And more.

A similar lack of focus plagued thinking on ventilators: worries in March 2020 that 8,000 NHS ventilators might be insufficient led to a call for British manufacturers to design and build new basic ones at speed. Companies with no history of making medical devices, like Rolls-Royce and Dyson, were recruited to the cause. The specs changed in April, as did treatment plans, and companies that were already making such machines to regulatory standards complained they had been sidelined. Many projects were quietly dropped and the government’s order for Dyson ventilators was cancelled.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 147). Profile. Kindle Edition.

It's clear that the Government's actions were chaotic and undirected, except when it came to funnelling taxpayers' money into VIP coffers.

It was the kind of haphazard, hour-by-hour decision-making in a crisis that wastes time, money, resources and emotional energy. Despite the best efforts of many fantastic civil servants, there was too little standing back and strategising.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 147). Profile. Kindle Edition.

The atmosphere of chaos made the government vulnerable to what looked like racketeering. I remember sitting in a Downing Street meeting hosted by Boris Johnson and being surrounded by some very good diagnostics companies who were trying to do the right thing, but also snake oil salesmen pushing rapid tests which were just useless. Everybody was just scrambling to buy whatever testing they could. There were arbitrary decisions to spend large amounts of money to order things even though everyone, including PHE, knew they were rubbish. It sometimes felt as if I had strayed on a set for The Third Man, that fantastic Carol Reed film of a Graham Greene novel, which features a black market for penicillin. At one point during April, I contacted Number 10 asking them to stop the government ordering rapid tests that were both useless and a massive distraction. At that time, there were no good rapid tests. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 147-148). Profile. Kindle Edition.

Not everything was quite so shambolic:

What was missing in March, April and May was calm strategic thinking. One bright spot was the appointment in April of Paul Deighton, the chief executive behind London’s 2012 Olympics, to solve the PPE shortage. He came in and sorted it all out very quietly behind the scenes. Another was Kate Bingham, chair of the Vaccine Taskforce, whose brio and competence stood out against a background of systemic mediocrity. It was all too common a pattern: individuals were incredibly able and willing, but were frustrated by the chaos, bureaucracy and lack of strategic direction. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 148). Profile. Kindle Edition.

On 27 March 2020, against lockdown rules, Dominic Cummings, chief adviser to prime minister Boris Johnson, drove himself and his family to his in-laws in Durham while possibly infected. He has since told a parliamentary committee that he did this because he and his family received death threats – but, whatever the circumstances, it was a disastrous mistake for someone in a public position. Cummings refused to resign – and Johnson, who himself left hospital on 12 April after recovering from Covid, did not sack him. Cummings gave a press conference defending himself in the Rose Garden at the PM’s residence. Whatever his story, he knew the rules and his actions sent a powerful signal that we were not all in this together, that the laws were different depending on who you were. As Jonathan Van-Tam, England’s deputy chief medical officer, said at the time, the rules applied to everyone. Public adherence depended on this basic principle, as the behavioural experts made clear. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 148-149). Profile. Kindle Edition.

Yes, "one rule for us, another for them" started with Cummings, and continued with Partygate. Meanwhile the libertarians who wanted to let the epidemic rip were targeting scientists like Neil Ferguson, who had been caught foolishly breaking lockdown rules (unlike in Cummings's case, the Government was quick to condemn Ferguson's actions).

The libertarians hated him because they saw his numbers, wrongly, as the driving force behind lockdowns, even though he was just one among several modellers. So they borrowed from the playbook used by the tobacco lobby and climate change sceptics: first, undermine the individual, then undermine the data and the advice. Dig out other scientists ready to give a so-called expert view that is diametrically opposed. The aim is to cast enough doubt on the science to sow public confusion. Presenting data and graphs of likely scenarios with confidence intervals was the right thing to do but it enabled critics to seize on the extremes, especially projections, to discredit perfectly reasonable central estimates.

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 149-150). Profile. Kindle Edition.

Ferguson is quoted as saying:

We’ve always had our fair share of conspiracy theorists and others on the fringes of the internet but what has been particularly disappointing is that media outlets have taken an avowedly political perspective. Their editorial departments seem to be less interested in the truth than in a political agenda and cherrypick things accordingly. It’s been shocking to see that in the UK.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 150). Profile. Kindle Edition.

Later in the year, we saw the Government making the same mistakes they made at the beginning of lockdown, but in reverse:

On 10 May 2020, I updated my colleagues with my worries about the apparent lifting of lockdown. That day, the PM had urged people who could not work from home to return to the office while avoiding public transport. Lifting lockdown was a prospect that alarmed SAGE, given how high infections were still running:

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 151). Profile. Kindle Edition.

The rest of May felt like a struggle to keep the floodgates shut. R was hovering stubbornly around 0.7–1.0. It needed to stay below 1 for the epidemic to shrink. But TTI was not properly in place and the UK was racking up as many as 9,000 new cases every day. Loosening restrictions while infections were running that high would quickly swamp the system. Schools would have a phased reopening from 1 June 2020, with non-essential retail following on 15 June. SAGE feared that multiple sectors – schools, retail, hospitality – would all be flung open in quick succession or all at once, rather than spacing them out to test the effect of each restriction on transmission. That would throw multiple canisters of fuel on to a viral fire that was waiting to be stoked again. In pressing to reopen, the PM might have been trying to meet his own timetable. Back on 19 March, Johnson declared that the UK could ‘turn the tide on coronavirus’ in 12 weeks. The deadline of 11 June was looming. SAGE believed that data, not dates, should set the timetable. Despite claims by the government that it was ‘following the science’, it was not. As in March, it was treading its own less cautious route under the cover of science. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 152). Profile. Kindle Edition.

So the UK Government locked down too late and opened up too early. There can't be much doubt that was because of the libertarian bent of the Tories in power.

On 31 May, a day that saw more than 1,000 coronavirus infections added to the national tally, I spelled out to my Wellcome colleagues what a mess the UK was in: People may have lost their trust in authority. No other country in Europe has lifted their restrictions with the case numbers UK has now. All countries had total daily new cases in the 100s when they lifted restrictions and all had more robust surveillance in place.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 152). Profile. Kindle Edition.

What infuriated me was the lack of honesty with the public. SAGE advice was unanimous: infections were high, TTI was crawling along at a snail’s pace, the app was a disaster, and any release, let alone multiple loosenings, would trigger a rise in cases that would be tough to track and contain. Ministers were not following the science, even if they said they were. Governments owe it to people to be clear about when they are following advice and when they are rejecting it. They must shoulder responsibility for the decisions and be upfront about the possible trade-offs. The public should have been warned that cases would rise as restrictions eased. Instead, they were led to believe the epidemic was over.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 153). Profile. Kindle Edition.

Then there was Eat Out to Help Out:

I wrote to my Wellcome colleagues, somewhat fearfully, that I was ‘concerned that everyone has interpreted ‘lock-down is over’’’. That was certainly the mood music. Pubs and restaurants threw open their doors on 4 July; newspapers celebrated the UK’s ‘Independence Day’ from the virus. On 3 August, the ‘Eat Out To Help Out’ scheme began. Diners would receive hefty discounts on meals served in venues but not for takeaways. John renamed it ‘Eat Out to Help the Virus Out’. Mike Ferguson at Wellcome called it ‘Eat Out to Spread It About’. By then, the UK Covid-19 death toll exceeded 46,000.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 156). Profile. Kindle Edition.

The effect was to create a tinderbox. Case numbers started rising through the months of July and August 2020. Minutes of a SAGE meeting held on 6 August noted: ‘Considering all available data, it is likely that incidence is static or may be increasing, meaning R may be above 1 in England.’

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 156). Profile. Kindle Edition.

In August, news emerged that the Government was going to scrap Public Health England:

Everything from July onwards was heading in the wrong direction. And then, on 16 August, news leaked that Public Health England was going to be abolished. Even worse, Dido Harding, who had failed to establish the world-beating TTI system promised over the summer, was appointed interim executive chair of PHE’s replacement, the National Institute for Health Protection. I just could not believe that Public Health England (PHE) was being thrown under the bus in the middle of a pandemic while the figurehead responsible for the TTI system was being promoted. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 169-170). Profile. Kindle Edition.

PHE was, in effect, being blamed for the coronavirus crisis, which at best was passing the buck. At the very least, it was disingenuous.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 170). Profile. Kindle Edition.

Farrar tweeted:

Arbitrary sackings. Passing of blame. Ill thought through, short term, reactive reforms. Out of context of under investment for years. Response to singular crisis without strategic vision needed for range [of] future challenges. Pre-empting inevitable public enquiry.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 170). Profile. Kindle Edition.

And he updated his Wellcome colleagues:

June–August was not used well enough to put in place what was needed, too much optimism that the worst was over and it could not be so bad again, continued focus on short term tactics, defending the indefensible, confirmation bias, and the lack of any central leadership or strategy ... Time has been wasted with distractions of ‘moonshots’, blaming the young or travellers/borders, the public enquiry, getting rid of PHE damaging morale of the very people who will be needed over the [next] 6 months, not preparing the NHS, TTI is very close to collapse at the moment...

If it can be prevented what needs to happen? (What should have happened June–August)

- Get the ‘boring’ basics right and ready for autumn/winter, implementing what we know works and just do it well
- Value competence above rhetoric
- Be honest and transparent about the situation and what is needed
- Narrow the gap between the advice, what we know needs to happen and the capacity to implement it
- Strengthen the Cabinet Office, No 10, or a new grouping to oversee this, not driven by political announcements but by making a real difference
- Does this need a cross party, national emergency crisis approach?
- Admit not everything is working, conduct an immediate review, within a week reset a real, joined up strategy...
- Stop trying to pretend ‘it’s all world beating’ – it is not and everyone knows it, repeating that only loses more trust...

(my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 171). Profile. Kindle Edition.

In September SAGE met again:

The advice from that SAGE meeting was unambiguous: ‘A package of interventions will be needed to reverse this exponential rise in cases…’ The measures included a ‘circuit breaker’, or a short lockdown; advice to work from home; banning the mixing of households, except those in support bubbles; closing cafés, bars, restaurants, indoor gyms and personal services, such as hairdressers; and moving university and college learning online.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 172). Profile. Kindle Edition.

Dominic Cummings has shed disturbing new light on the events in September 2020 and the efforts made to persuade Johnson that a circuit breaker was needed. By that time, Cummings said, there was unanimity among Patrick, Chris, Ben Warner and John Edmunds that intervention was needed. The Prime Minister did not want to act. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 173). Profile. Kindle Edition.

Cummings claims that, in order to try to change Johnson’s mind, he organised a meeting on Tuesday 22 September 2020, at which Johnson was presented with the case numbers and infections rates by Catherine Cutts, a data scientist newly recruited to Number 10. She showed Johnson the current data and then fast-forwarded a month, to role-play the scenario of infections and deaths projected for October. Cummings says: ‘We presented it all as if we were about six weeks in the future. This was my best attempt to get people to actually see sense and realise that it would be better for the economy as well as for health to get on top of it fast. Johnson basically said, “I’m not doing it. It’s politically impossible and lockdowns don’t work.”’ (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 173-174). Profile. Kindle Edition.

So, there you have it: lockdown scepticism from the PM of the UK, during the most dangerous public health crisis in living memory. Lockdowns do work (otherwise what was happening in spring 2020?), but they were politically difficult for him and his libertarian fellows.

Johnson reportedly told Cummings that he should never have locked down in the first place and that he felt Cummings had manipulated SAGE into calling for the March lockdown. This allegation that SAGE was ‘manipulated’ is untrue. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 174). Profile. Kindle Edition.

Whatever happened behind the doors of Number 10, the government chose not to act in September 2020. Or, rather, it chose to not impose a lockdown – only a 10pm curfew and a request for people to work from home. I respect the mantra that scientists advise and ministers must decide, but ministers were clearly overriding SAGE advice, often while claiming to follow it. Not acting is a decision in itself – and it had awful consequences. This was not March 2020, where, if you were exceptionally charitable, you could just about claim that we did not know the epidemic was coming and the data was poor. Back in March 2020, ministers could, at a stretch, have said they did not want to make a decision as grave as locking down the country on the basis of infectious disease modelling and the possibly overblown fears of public health doctors. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 174). Profile. Kindle Edition.

Six months later, in September 2020, the government had no such excuse. We had already been through it. We knew what a lockdown could achieve – and the terrible impact of delaying it. There was no way that the lack of action could be blamed on poor data: by the autumn of 2020, the UK were collecting some of the best epidemiological data in the world. And that data was clearly showing the epidemic was climbing, week after week after week. R was above 1 (at the next SAGE meeting, on 24 September, it was recorded in the minutes as lying between 1.2 and 1.5). (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 174-175). Profile. Kindle Edition.

The SAGE meeting on 22 October was ... unequivocal. The minutes laid out the signposts towards that winter disaster: an epidemic growing exponentially; an R of between 1.2 and 1.4; modelling that was showing between 53,000 and 90,000 new infections a day in England. Surveys suggested an average of 433,000 people were, at that moment, infected just in England.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 179). Profile. Kindle Edition.

If scientists were advocating a lockdown, many politicians definitely were not. On 4 November, I was invited to talk via Zoom to the Covid Recovery Group, a group of 80 or so Conservative MPs in the UK Parliament. (I briefed the Shadow Cabinet on another occasion). I explained why tight restrictions were needed, highlighting rises in infections, hospitalisations and deaths. They asked questions like, ‘What should I say to my constituents who don’t see much Covid?’ I kept coming back to the point that you can either act now for a shorter time or you can act later for longer. You have a choice, but only when it comes to timing: you cannot choose whether to act or not. That is exactly the message that I have been giving to Emmanuel Macron from late 2020. We cannot begin to comprehend the anguish of a leader who is deciding whether to shut down his or her country, but the later the action, the more lives that will be lost and the more disruption to all sectors of society: schools, businesses, leisure, transport. Governments are eventually forced to act because they cannot simply stand by and watch their health systems collapse, as happened in the UK in March 2020. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 180-181). Profile. Kindle Edition.

The MPs listened respectfully and, in a passive-aggressive sort of way, were apparently thankful for me appearing before them. But did I change anyone’s mind? No. Their minds seemed already made up and I believe the majority went on to vote against restrictions.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 181). Profile. Kindle Edition.

Of course, because their objections were ideological, not based on reality.

I have thought deeply about why my scientific world view was anathema to those MPs. One reason is ideology: libertarianism is one of their guiding principles. Lockdowns are a sign of big government and undoubtedly curb individual freedoms in a draconian way that none of us want. But the alternative is worse, as we have discovered.

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 181). Profile. Kindle Edition.

On the Great Barrington Declaration:

The Great Barrington Declaration was ideology masquerading as science and the science was still nonsense. There was no evidence to support its central idea that herd immunity was a viable strategy. Earlier in the year, one of its scientists, Sunetra Gupta, had claimed that half of the UK had already been infected and therefore the UK was on its way to acquiring herd immunity. The antibody data showed nothing of the sort; only 6 per cent of the UK population had been infected by September 2020, nine months into the epidemic. At that point, the Declaration believers explained away the discrepancy between their theory and the antibody data by suggesting people were protected by ‘immunological dark matter’. It was an evidence-free assertion, as was the insistence by the Great Barring[t]on supporters that there would be no second wave. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 182). Profile. Kindle Edition.

Dominic Cummings claims he had wanted to run an aggressive press campaign against those behind the Great Barrington Declaration and to others opposed to blanket Covid-19 restrictions, such as Carl Heneghan (an Oxford University professor and GP), the oncologist Karol Sikora and Mail on Sunday columnist Peter Hitchens. Cummings says: ‘In July, I said to [Johnson], ‘Look, much of the media is insane, you’ve got all of these people running around saying there can’t be a second wave, lock-downs don’t work, and all this bullshit. Number 10’s got to be far more aggressive with these people and expose their arguments, and explain that some of the nonsense being peddled should not be treated as equivalent to serious scientists. They were being picked up by pundits and people like Chris Evans [the Telegraph editor] and Bonkers Hitchens [Peter Hitchens].’ According to Cummings, Johnson rejected the idea of being more aggressive with the media, saying, ‘The trouble is, Dom, I’m with Bonkers. My heart is with Bonkers, I don’t believe in any of this, it’s all bullshit. I wish I’d been the Mayor in Jaws and kept the beaches open.’ (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 182-183). Profile. Kindle Edition.

For the record, nobody is pro-lockdown. Lockdowns are a last resort, a sign of failure to control the epidemic in other ways. Locking down does not change the fundamentals of a virus but buys time to increase hospital capacity, testing, contact tracing, vaccines and therapeutics. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (p. 183). Profile. Kindle Edition.

Those behind the declaration, who managed to recruit people running the country to their cause, have done a great disservice to science and public health (that is the price one pays for the principle of academic freedom). There was no data to support their theory, as immunology studies bear out. Danny Altmann, professor of immunology at Imperial College in London, described the central tenet of the Great Barrington Declaration as ‘nonsense’, and added that he could not see immunologists among the signatories. ‘It was unfortunate that their views could command such attention,’ Danny says. Nonetheless, they got a lot of airtime and had the ear of those in government, particularly in the US and UK, who shared the same optimism bias for an easy solution. Frankly, I think their views and the credence given to them by Johnson were responsible for a number of unnecessary deaths. (my emphasis)

Farrar, Jeremy; Ahuja, Anjana. Spike (pp. 183-184). Profile. Kindle Edition.

Well, there's lots more where that came from, but I've reached my copy limit on the book. Hopefully there is enough here to show that Farrar considers the UK Government's response to the pandemic to have been inadequate in many respects, anti-science, and ideological. No one wants lockdowns (what an idea!) but people want to do the best thing for the general population. In the absence of vaccines, lockdowns were and are necessary in the circumstances of the Covid pandemic.